According to Google’s SRE book, “At least 50% of each SRE’s time should be spent on engineering project work that will either reduce future toil or add service features. Feature development typically focuses on improving reliability, performance, or utilization, which reduces toil as a second-order effect. We share this 50% goal because toil expands if left unchecked and can quickly fill 100% of everyone’s time. The work of reducing toil and scaling up services is the “Engineering” in Site Reliability Engineering. Engineering work is what enables the SRE organization to scale up sublinearly with service size and to manage services more efficiently than either a pure Dev team or a pure Ops team.”

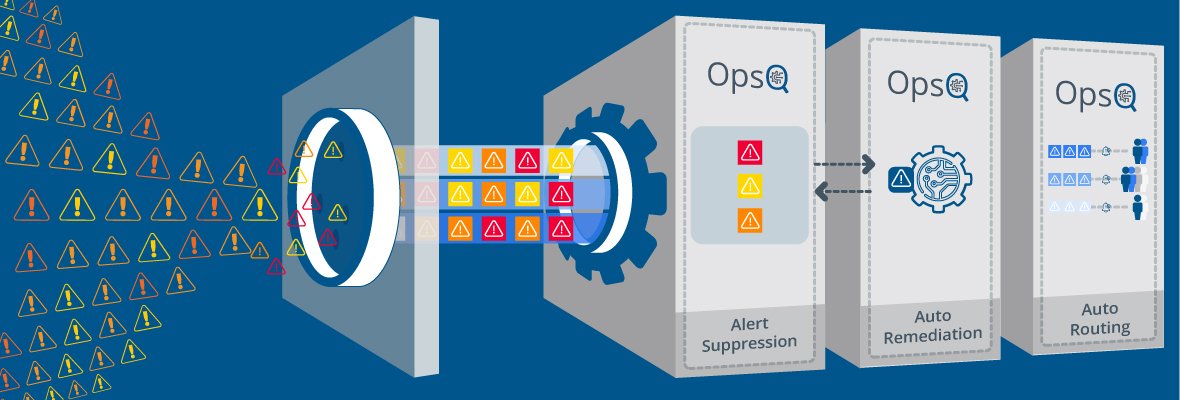

A major challenge for an SRE team balancing feature development against toil reduction is managing alert noise. False alarms pull people away from important issues that must be addressed immediately. An alert is noise to the SRE team if:

- It doesn’t provide substantial information about an event that has occurred.

- It doesn’t provide enough information about a future event that may affect the end user.

In such cases, the engineer cannot act on the alert, so it is not worth sending. It only wastes the engineer’s time and distracts them from their routine tasks.

Setting the right thresholds

To reduce alert fatigue and noise, you need to set the right thresholds for triggering alerts. You also need to decide which alerts are important enough to send to your on-call platform. Your IT monitoring system may track 200 to 300 parameters or more, but you do not need an alert for every parameter or metric. Set alerts only where they are meaningful for system reliability and availability. You can still collect the other parameters as non-alerting data and use them for pre-emptive incident analysis. Only alerts that require immediate action should send notifications. Everything else can simply be recorded to provide context later.
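
The split between alerts that notify and alerts that are only recorded can be sketched roughly as follows. This is a minimal illustration, not any particular monitoring product's API: the `Rule` class, the metric names, and the threshold values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    metric: str       # hypothetical metric name
    threshold: float  # value above which the rule is breached
    page: bool        # True: notify on-call; False: record only


# Illustrative rules only; real thresholds depend on your system.
RULES = [
    Rule("error_rate_percent", 5.0, page=True),
    Rule("cpu_percent", 85.0, page=True),
    Rule("cache_miss_percent", 40.0, page=False),
]


def evaluate(samples, rules=RULES):
    """Split breached metrics into (pages, records)."""
    pages, records = [], []
    for rule in rules:
        value = samples.get(rule.metric)
        if value is None or value <= rule.threshold:
            continue  # metric missing or within limits
        (pages if rule.page else records).append(rule.metric)
    return pages, records


pages, records = evaluate(
    {"error_rate_percent": 7.2, "cpu_percent": 40.0, "cache_miss_percent": 55.0}
)
print("notify on-call:", pages)
print("record for context:", records)
```

Here only the error-rate breach reaches the on-call engineer, while the cache-miss breach is kept as context for later analysis.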

Alert Flapping

Sometimes alerts flap, that is, they switch quickly back and forth between OK and alert states. This can usually be managed by adjusting the threshold limits or conditions. Flapping often occurs because of a sudden increase in user activity during the busiest times of the day. For example, if you have set your CPU alert at 60% but regularly see usage hover between 59.8% and 60.8% during peak hours, you can raise the threshold to 61% or even 62%. Setting up incremental alerts is also beneficial: with an additional alert for CPU usage at 85%, you know that something is genuinely wrong with the system whenever that higher alert triggers.
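
A small sketch can make the CPU example concrete. It counts how often the alert state flips for a series of readings, then maps a reading to an incremental severity level. The sample readings, the `LEVELS` table, and the function names are assumptions made for illustration.

```python
def count_transitions(samples, threshold):
    """Count how many times the state flips between OK and ALERT."""
    transitions = 0
    previous = None
    for value in samples:
        state = value >= threshold  # True means ALERT
        if previous is not None and state != previous:
            transitions += 1
        previous = state
    return transitions


# Incremental levels, highest first (illustrative values).
LEVELS = [(85.0, "critical"), (61.0, "warning")]


def severity(value):
    """Return the highest level whose threshold the value reaches."""
    for threshold, name in LEVELS:
        if value >= threshold:
            return name
    return "ok"


# Hypothetical peak-hour CPU readings hovering around 60%.
cpu = [59.8, 60.8, 59.8, 60.8, 59.8, 60.8]
print(count_transitions(cpu, 60.0))  # 5 flips at a 60% threshold
print(count_transitions(cpu, 61.0))  # 0 flips at a 61% threshold
print(severity(60.8), severity(70.0), severity(90.0))
```

Raising the threshold by one point removes the flapping entirely for these readings, and the 85% level still signals a genuine problem.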

Joining or merging similar incident alerts

Suppose you have an alert rule that checks disk usage every hour and triggers if usage goes over 60%. You may also have an earlier rule that triggers when disk usage is above 80%. When usage climbs past 80%, both rules fire for the same underlying problem. The on-call system should be configured to merge these similar events so that you are notified only once.
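
One way to picture the merge is to group alerts by the resource they describe and keep a single entry per group. The sketch below assumes each alert is a plain dictionary with hypothetical `host`, `metric`, `threshold`, and `value` fields; real on-call platforms have their own grouping rules.

```python
def merge_alerts(alerts):
    """Collapse alerts sharing (host, metric) into one per resource.

    The alert with the highest breached threshold is the one kept.
    """
    merged = {}
    for alert in alerts:
        key = (alert["host"], alert["metric"])
        current = merged.get(key)
        if current is None or alert["threshold"] > current["threshold"]:
            merged[key] = alert
    return list(merged.values())


# Hypothetical alerts: disk usage on db-1 breaches both the 60% and 80% rules.
alerts = [
    {"host": "db-1", "metric": "disk_percent", "threshold": 60, "value": 83},
    {"host": "db-1", "metric": "disk_percent", "threshold": 80, "value": 83},
    {"host": "web-1", "metric": "disk_percent", "threshold": 60, "value": 65},
]

for alert in merge_alerts(alerts):
    print(alert["host"], alert["metric"], "over", alert["threshold"])
```

The two `db-1` alerts become a single notification at the 80% level, while the unrelated `web-1` alert is left untouched.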

Differentiating the types of alerts

Every alert has its importance, but some alerts concern the availability and operation of core systems. It is important to distinguish these alerts from the rest by tagging them with the right key-value pairs.
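
As a rough illustration, tags can drive what happens to each alert. The tag keys (`severity`, `tier`) and the three routing outcomes below are hypothetical choices for this sketch, not a standard scheme.

```python
def route(alert):
    """Decide how to handle an alert from its key-value tags."""
    tags = alert.get("tags", {})
    severity = tags.get("severity")
    # Only critical alerts on core systems interrupt the on-call engineer.
    if severity == "critical" and tags.get("tier") == "core":
        return "page"
    # Other notable alerts wait for working hours.
    if severity in ("critical", "warning"):
        return "ticket"
    # Everything else is only recorded.
    return "log"


print(route({"name": "db down", "tags": {"severity": "critical", "tier": "core"}}))
print(route({"name": "batch slow", "tags": {"severity": "warning", "tier": "batch"}}))
print(route({"name": "cache churn", "tags": {"severity": "info"}}))
```

With tags like these, an alert that can wait until morning becomes a ticket instead of a page.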

No SRE wants to be woken at 2:00 am for a false alert, or for an alert that could be handled the next morning. Alert management, including features that mitigate alert noise, is therefore a crucial function in SRE.