There can be numerous ways to migrate data from a database to Big Query like exporting to CSV, Parquet files, direct DB connection, etc. So in this article, we will focus on two ways-

- Using Fivetran connector

- Using a python script

Fivetran connector

GCP provides numerous connectors for database connection/migration, one of which is Fivetran.

Using Fivetran we can establish a direct connection between Big Query and Oracle database for this we need to just configure it by providing the live hosting address of our database and its credentials,

Once verified then it’s good to go for data migration.

But using Fivetran connector is not free, it’s chargeable.

Using Python script

This method is the best if the Oracle database is at the local system and does not have a hosting address.

In this using python script, we will establish a type of connection between the Oracle database and Big Query, for that, we need to connect the python script with the oracle database using proper connection string and same way using the credential key provided by GCP we can establish a connection with Big Query.

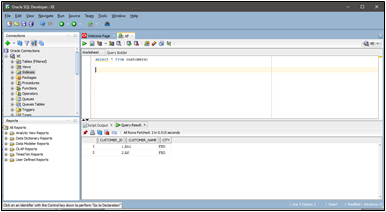

After connection establishment, we need to get the data from the Oracle database for which we perform a SQL query inside python script using Sqlalchemy and Pandas and the result will be the desired data stored in Dataframe.

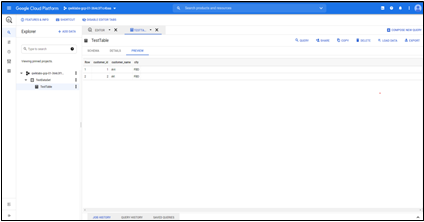

Using Panda’s “to_gbq” method we can easily send our data from the data frame to the Big Query table.

We can make separate text files for the connection string, SQL query, GCP Big Query credential and read them in python script for easy use. This makes it easier to transfer data; change the SQL script and run the python script, and your data will be transferred.